Efficient Asymmetric Co-Tracking using Uncertainty Sampling

2017 IEEE International Conference on Signal and Image Processing Applications, Kuching, Malaysia, Sep 12-Sep 14, 2017

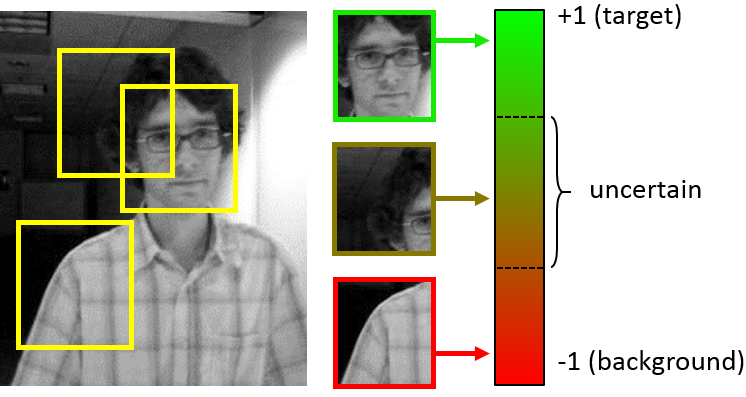

Adaptive tracking-by-detection approaches are popular for tracking arbitrary objects. They treat tracking as a classification task and use online learning techniques to update the object model. However, these approaches depend heavily on the efficiency and effectiveness of their detectors. Evaluating a massive number of samples for each frame (e.g., obtained by a sliding window) forces the detector to trade accuracy for speed. Furthermore, misclassification of borderline samples by the detector introduces errors that accumulate during tracking. In this study, we propose a co-tracking framework based on the efficient cooperation of two detectors: a rapid adaptive exemplar-based detector and a more sophisticated but slower detector with a long-term memory. Sample labeling and co-learning of the detectors are conducted by an uncertainty sampling unit, which improves the speed and accuracy of the system. We also introduce a budgeting mechanism that prevents unbounded growth in the number of examples in the first detector, maintaining its rapid response. Experiments demonstrate the efficiency and effectiveness of the proposed tracker against its baselines and its superior performance against state-of-the-art trackers on various benchmark videos.

Overview

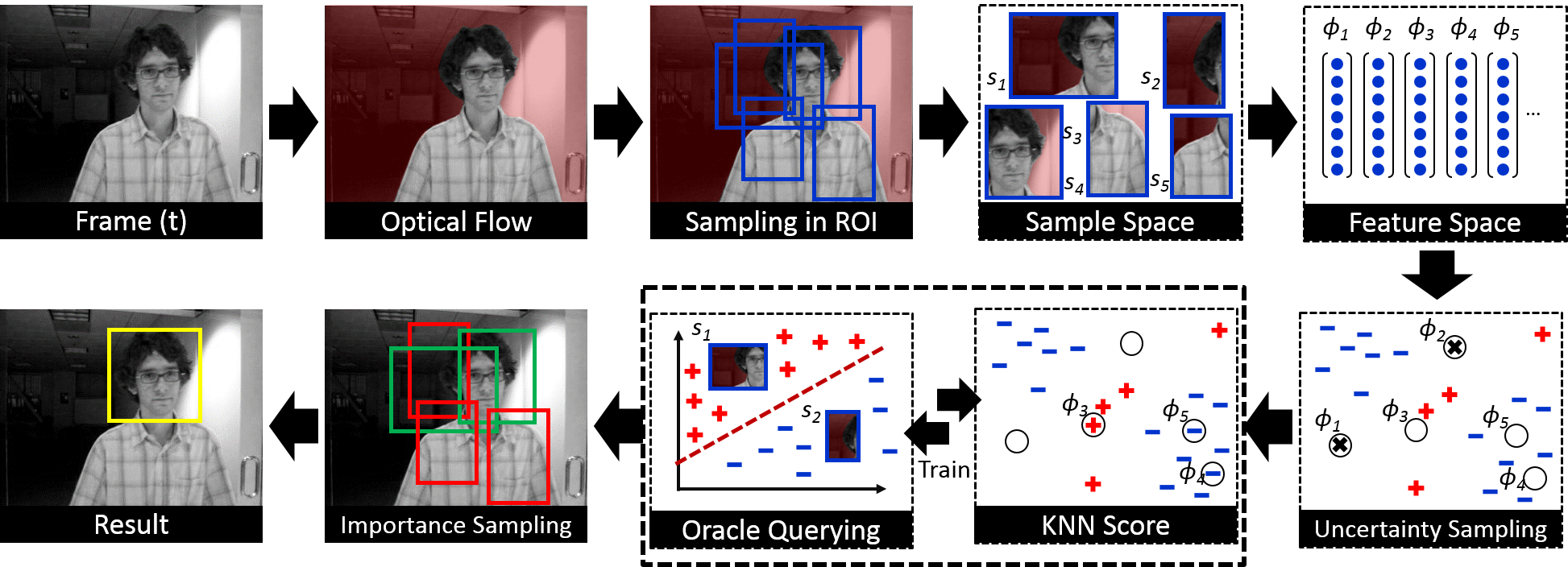

In this study, we propose an efficient co-tracking framework in which an active learning unit orchestrates the information exchange. It consists of a rapid detector with a short-term memory and an uncertainty sampling switcher that queries the labels of the rapid detector's most uncertain samples from an accurate detector with a long-term memory (called the oracle). An importance sampling scheme combines the results of the two trackers and handles the scale variation of the target. An exemplar-based detector is employed as the rapid detector, and we introduce a budgeting mechanism that prevents unbounded growth in the number of examples in this detector, maintaining its rapid response. In summary, we

- employ active learning in a co-tracking framework, which increases the speed and generalization power of the tracker,

- actively control the memory of the tracker by balancing short- and long-term memories, and

- introduce an intuitive budgeting method for the nearest neighbor classifier.
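
The information exchange described in the overview can be illustrated with a minimal sketch. It assumes that both detectors return a target-likelihood score in [0, 1] and that a sample is "uncertain" when the rapid detector's score falls within a band around 0.5. The function name `co_label`, the `margin` parameter, and the 0.5 threshold are illustrative assumptions, not the uncertainty measure used in the paper.

```python
import numpy as np


def co_label(samples, rapid_score, oracle_score, margin=0.2):
    """Label samples with the rapid detector and defer uncertain ones to the oracle.

    samples      : (n, d) array of feature vectors
    rapid_score  : callable, (n, d) array -> n scores in [0, 1] (fast detector)
    oracle_score : callable, (m, d) array -> m scores in [0, 1] (slow detector)
    margin       : half-width of the uncertainty band around 0.5 (assumed value)

    Returns (labels, queried), two boolean arrays of length n.
    """
    samples = np.asarray(samples, dtype=float)
    scores = np.asarray(rapid_score(samples), dtype=float)

    # Samples whose score is close to the decision threshold are uncertain.
    queried = np.abs(scores - 0.5) < margin

    # Confident samples keep the rapid detector's label.
    labels = scores >= 0.5

    # Only the uncertain subset is sent to the slower oracle.
    if queried.any():
        oracle = np.asarray(oracle_score(samples[queried]), dtype=float)
        labels[queried] = oracle >= 0.5
    return labels, queried
```

Because the oracle is evaluated only on the queried subset, its cost scales with the number of uncertain samples rather than with the full sample set. In a co-learning loop, the labels returned for the queried samples could then be used to update the rapid detector.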

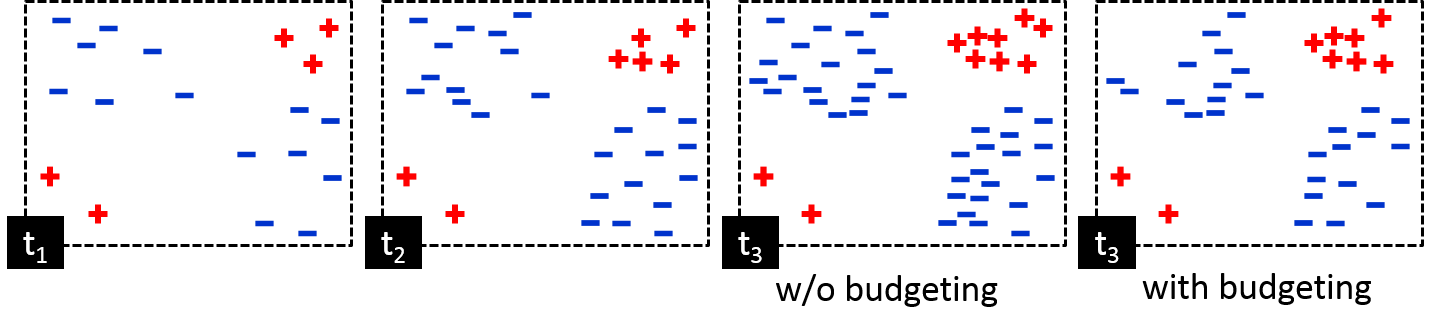

The ever-growing example space of a KNN classifier and the budgeting mechanism that discards the most futile samples. Without budgeting, the classifier adds or keeps some samples that have no effect on the decision boundary.

Online learning of discriminative trackers has its own challenges. The sample set of most adaptive classifiers grows constantly, making them slower over time. For a KNN classifier, even with a robust KD-tree architecture, the computation cost increases rapidly over time. This is similar to the curse of kernelization, in which the number of support vectors of a kernel classifier increases with the amount of training data. To allow for real-time operation, the number of stored samples must be controlled. Reducing the dataset for KNN classification has been studied in the literature (e.g., the condensed nearest neighbor rule [Angiulli, 2005]), yet such methods are not suitable for tracking, in which the distributions of target and background are non-stationary and samples need to be kept or removed based on the temporal properties of the tracking task.

We propose a scheme that tends to discard the most futile samples from the sample pool while preserving the recent or essential ones. The accompanying figure depicts a sample 2D feature space of a KNN classifier and demonstrates how this budgeting mechanism preserves the classification power while reducing the number of samples.

- Do not add a sample if all of its neighbors have the same label.

- Attach a timer to each new sample.

- Increment the timers of a new sample's neighbors that have a different label.

- Decrement the timers of all samples when new data arrives.

- When a sample's timer expires, remove the sample if and only if all of its neighbors have the same label.

- Together, these rules remove futile samples and provide a forgetting mechanism that lets the KNN handle local minima.
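
The rules above can be sketched as a small brute-force KNN with per-sample timers. This is an illustrative reading, not the authors' implementation: the class name `BudgetedKNN`, the initial timer value `ttl`, and the Euclidean distance are assumptions. The sketch also assumes that "all neighbors have the same label" means that a full neighborhood of `k` samples shares the label of the sample in question, and that an expired sample near the decision boundary is simply kept and rechecked later.

```python
import numpy as np


class BudgetedKNN:
    """Brute-force KNN whose sample pool is pruned with per-sample timers."""

    def __init__(self, k=3, ttl=5):
        self.k = k        # neighborhood size
        self.ttl = ttl    # initial timer value of a new sample (assumed)
        self.X, self.y, self.timer = [], [], []

    def _neighbors(self, x, exclude=None):
        """Indices of the (up to) k stored samples nearest to x."""
        idx = [i for i in range(len(self.X)) if i != exclude]
        if not idx:
            return []
        dist = np.linalg.norm(np.asarray([self.X[i] for i in idx]) - x, axis=1)
        return [idx[j] for j in np.argsort(dist)[: self.k]]

    def predict(self, x):
        """Majority vote among the nearest stored samples."""
        votes = [self.y[i] for i in self._neighbors(np.asarray(x, dtype=float))]
        return max(set(votes), key=votes.count)

    def add(self, x, label):
        """Offer a labeled sample; return True if it was stored."""
        x = np.asarray(x, dtype=float)
        # All stored samples age whenever new data arrives.
        self.timer = [t - 1 for t in self.timer]
        nb = self._neighbors(x)
        # A sample whose full neighborhood already agrees with it is redundant.
        redundant = len(nb) == self.k and all(self.y[i] == label for i in nb)
        if not redundant:
            # Neighbors with a different label lie near the boundary: extend them.
            for i in nb:
                if self.y[i] != label:
                    self.timer[i] += 1
            # Store the new sample with a fresh timer.
            self.X.append(x)
            self.y.append(label)
            self.timer.append(self.ttl)
        self._prune()
        return not redundant

    def _prune(self):
        """Drop expired samples whose full neighborhood shares their label."""
        i = 0
        while i < len(self.X):
            nb = self._neighbors(self.X[i], exclude=i)
            interior = len(nb) == self.k and all(self.y[j] == self.y[i] for j in nb)
            if self.timer[i] <= 0 and interior:
                del self.X[i], self.y[i], self.timer[i]
            else:
                i += 1
```

Under these assumptions, samples near the decision boundary keep having their timers extended by opposing neighbors, while interior samples eventually expire and are removed, so the pool stays small without moving the boundary. The brute-force neighbor search is used only for brevity.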

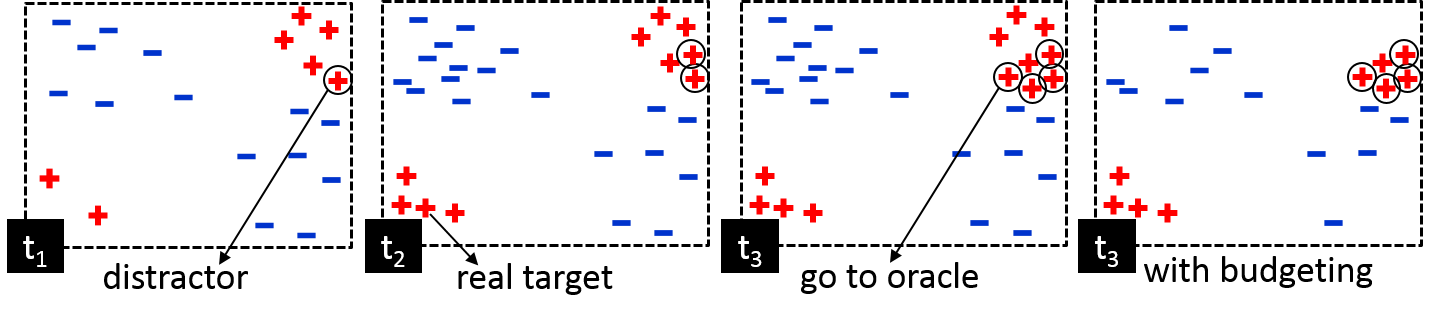

A KNN local minimum and the budgeting method that helps resolve it. Budgeting prevents a distractor from reinforcing itself by influencing its neighbors and spreading throughout the sample space.

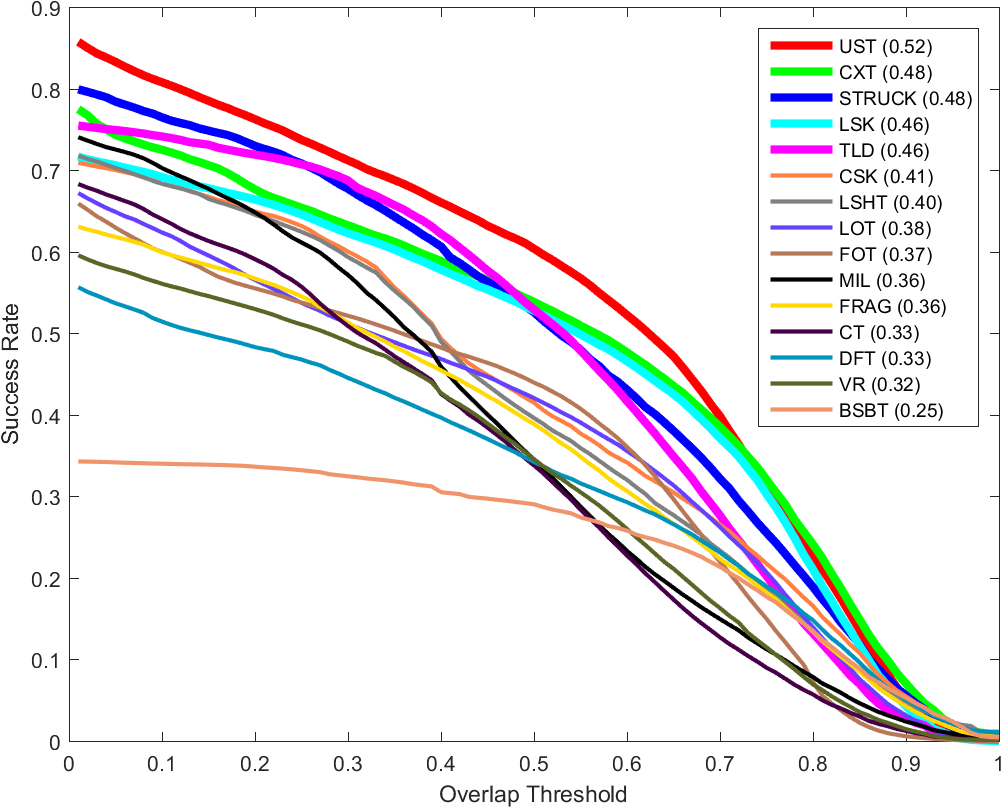

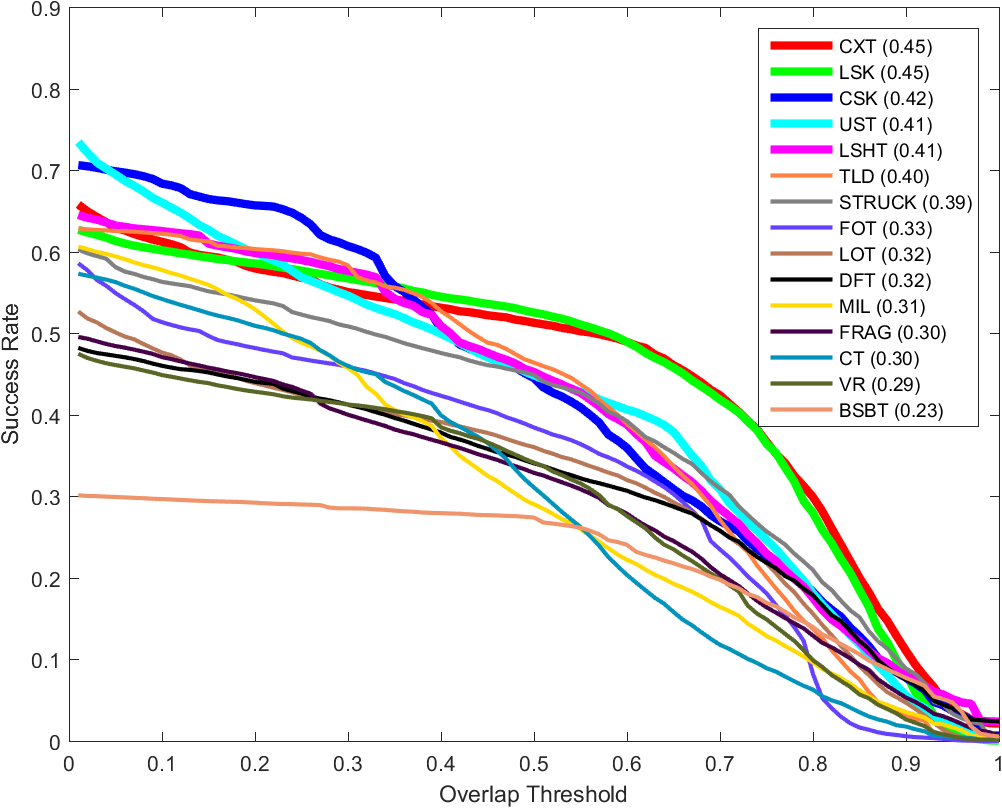

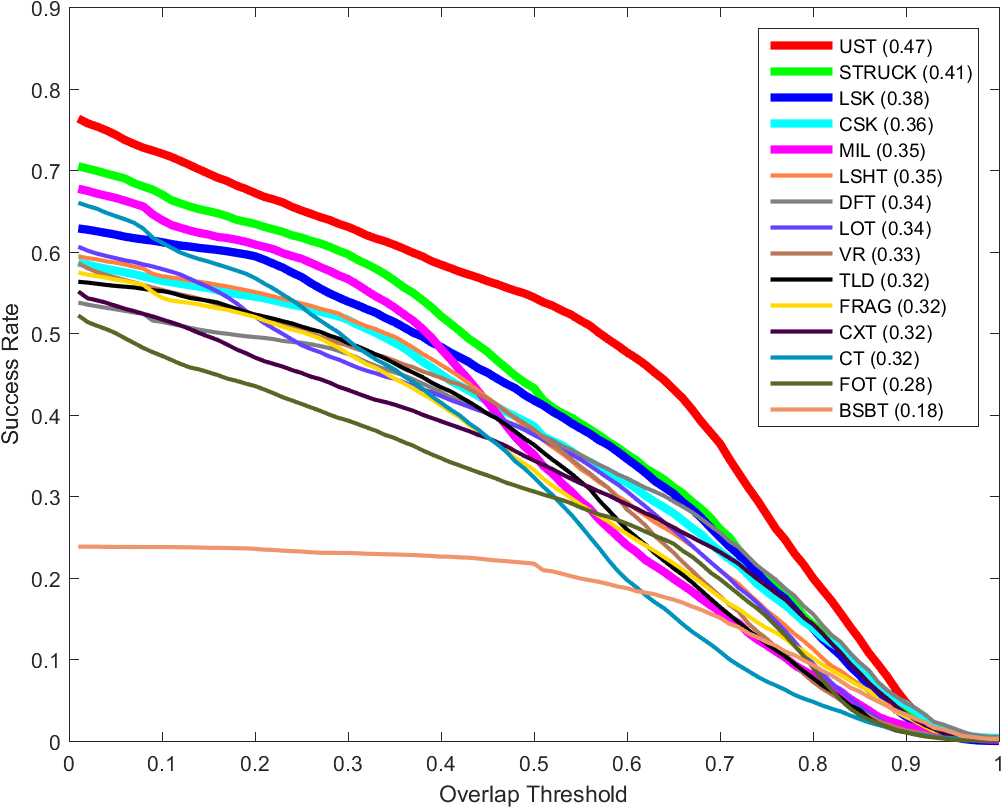

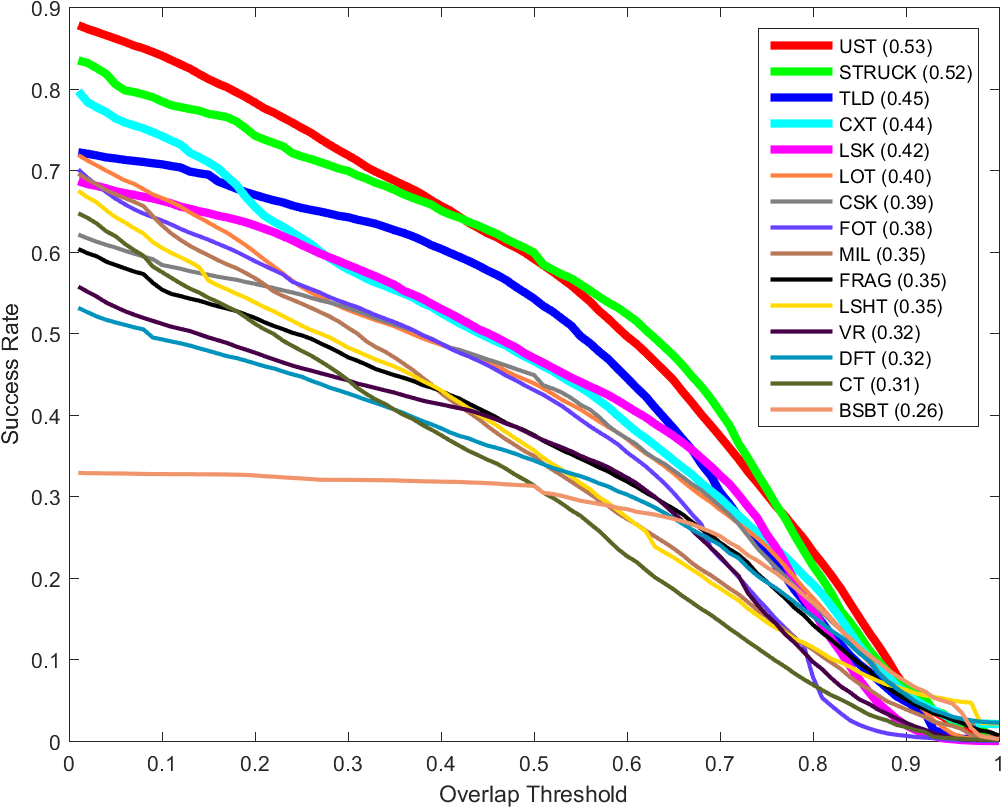

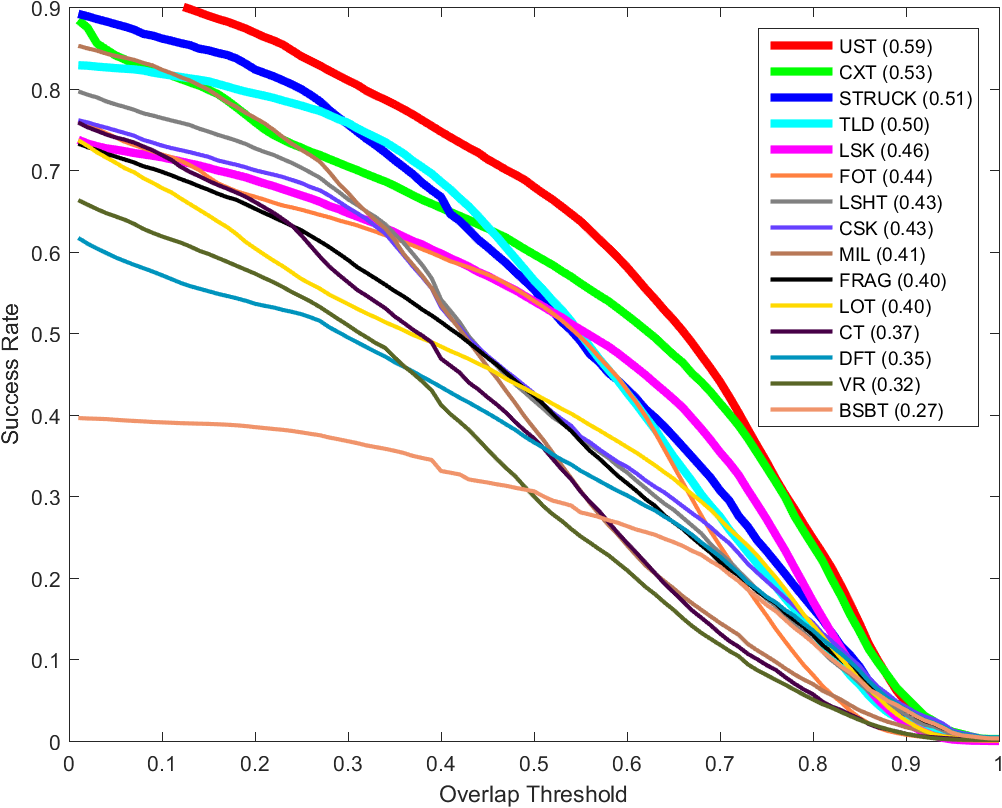

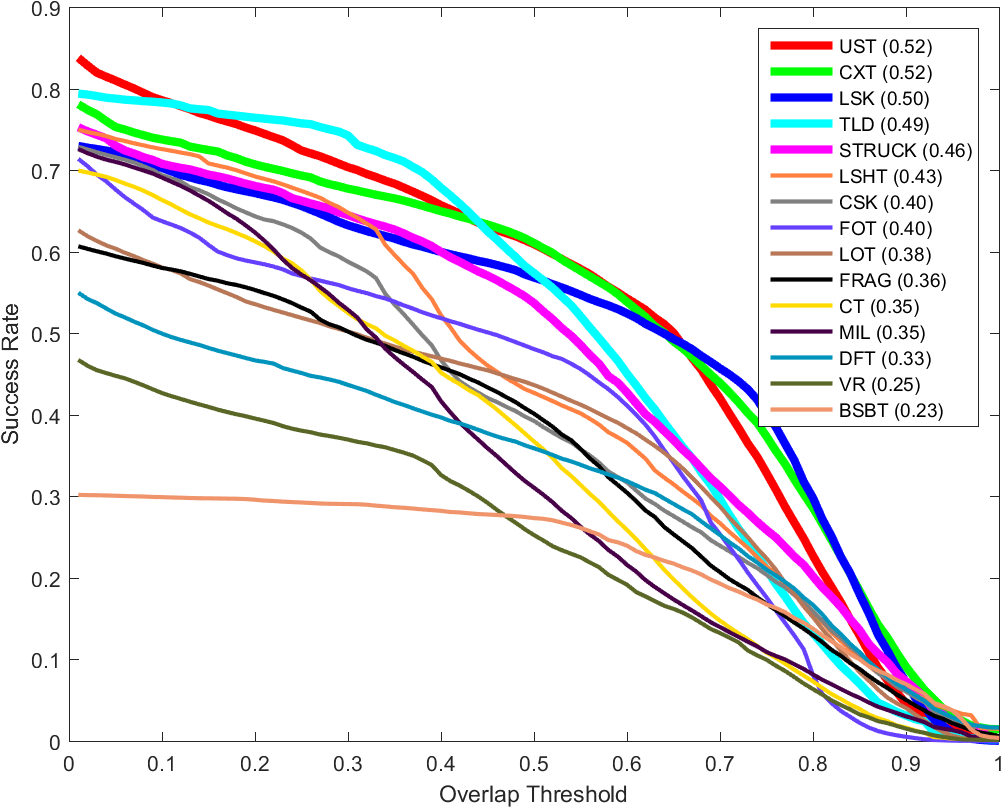

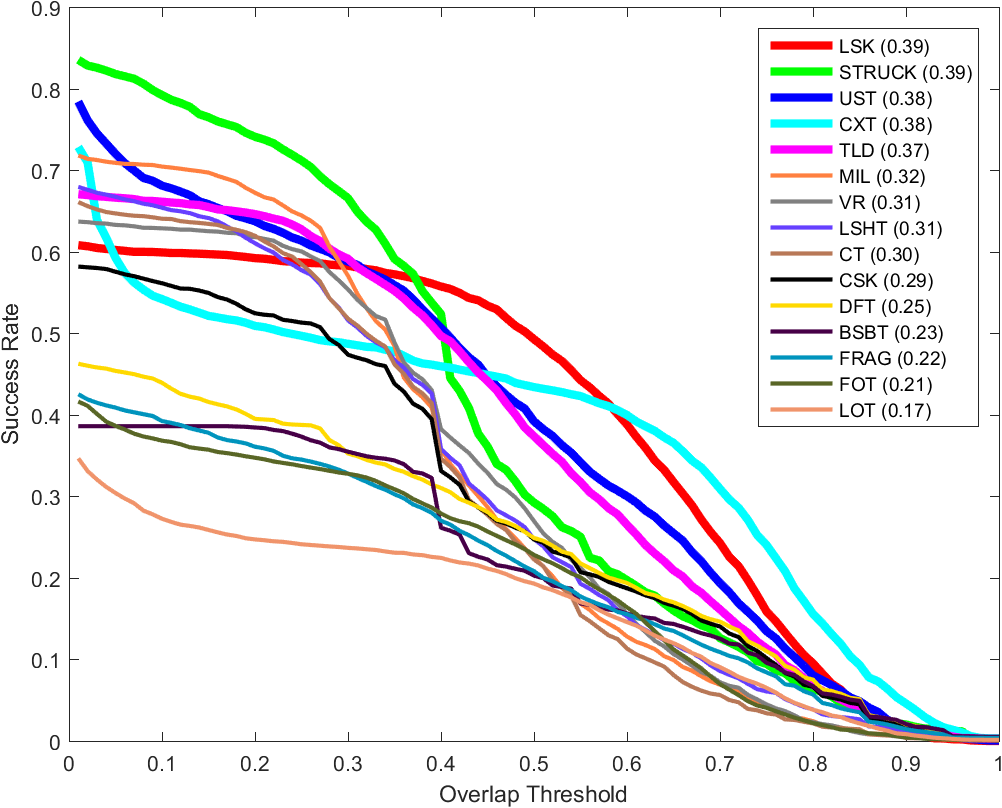

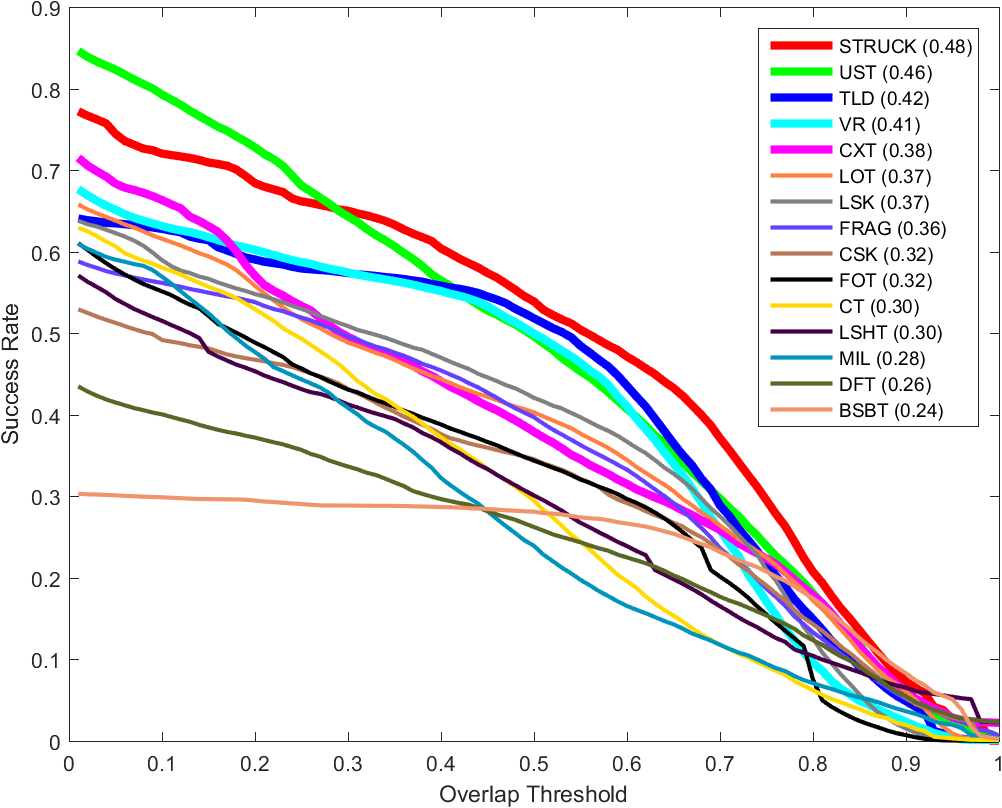

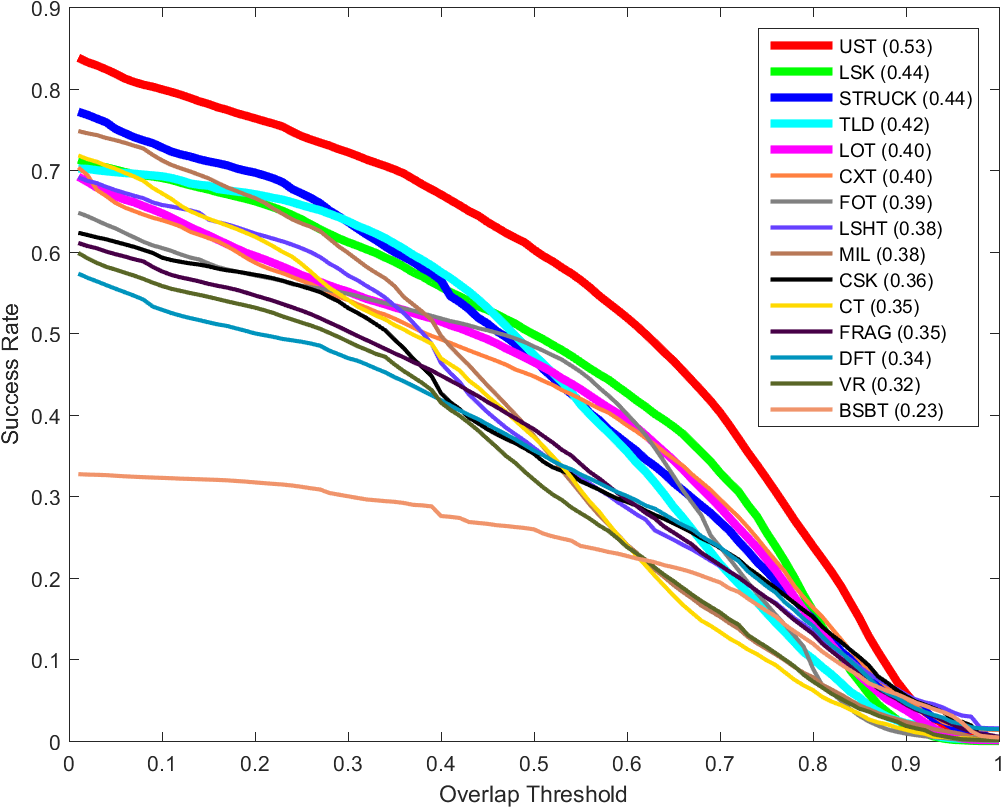

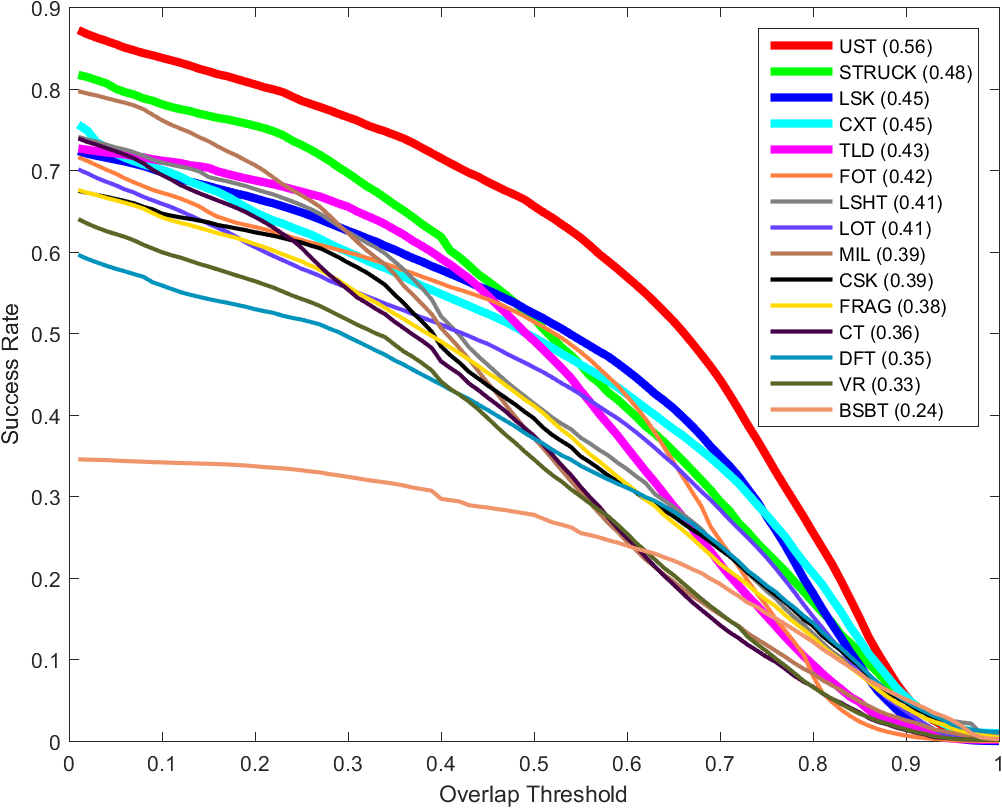

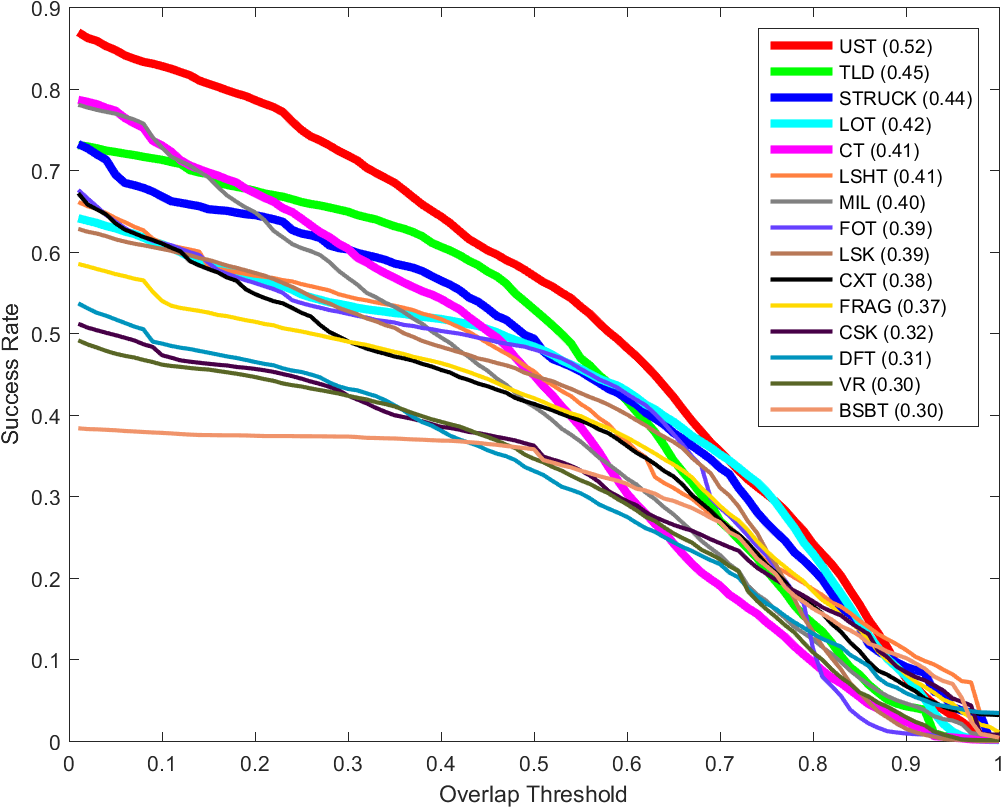

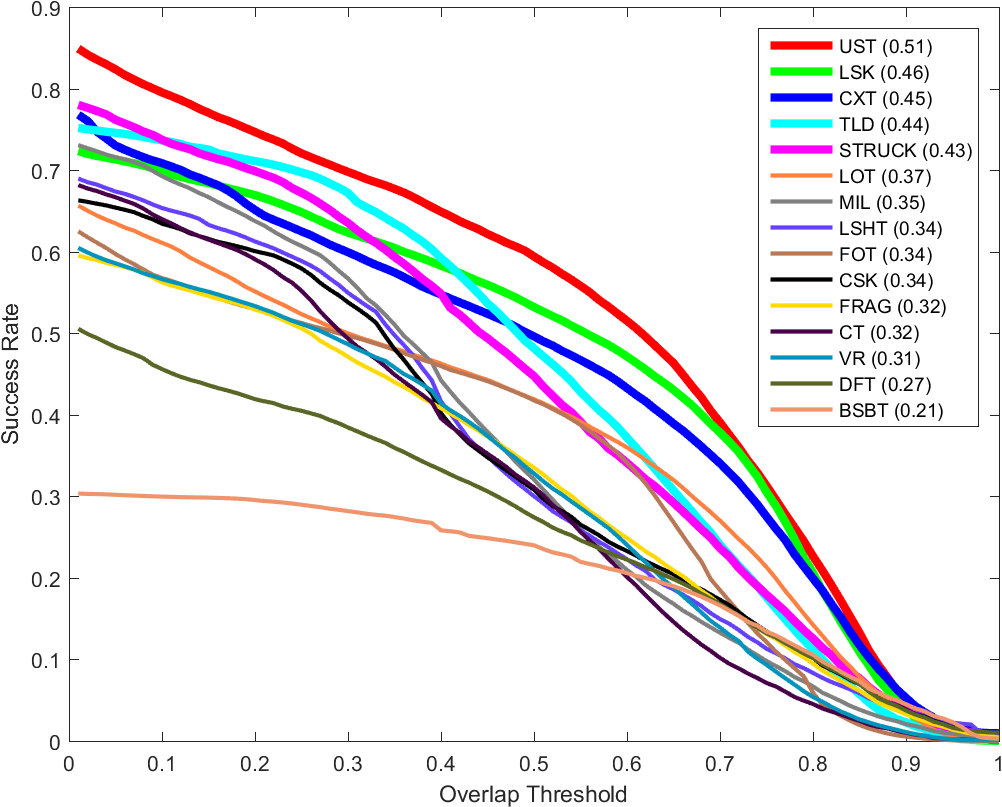

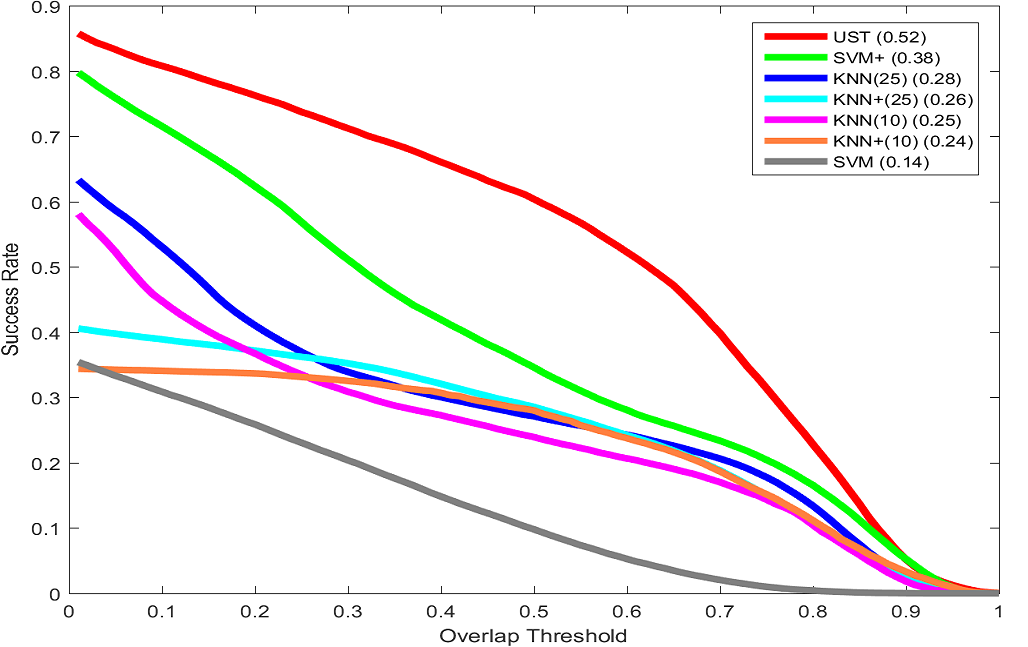

Success Plots

Other Experiments

This experiment demonstrates the advantages of the proposed tracker over trackers that consist of either of its detectors in isolation. To this end, we construct several trackers from the components of the proposed tracker to serve as baselines. In all of these trackers, the ROI detection and input sampling are similar to those of the UST tracker. The KNN(10) and KNN(25) trackers use only the feature-based nearest neighbor detector, with neighborhood sizes of k=10 and k=25, respectively. The KNN+(10) and KNN+(25) trackers additionally incorporate the proposed budgeting mechanism into their detector. The SVM tracker employs the oracle to track the target, whereas SVM+ includes the classifier update in its framework.
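
For reference, the baseline variants described above can be summarized as a small configuration table. The dictionary layout and the key names (`detector`, `k`, `budgeting`, `classifier_update`) are illustrative assumptions. Only the settings stated in the text are encoded.

```python
# Baseline trackers built from the components of the proposed tracker.
# Key names are hypothetical; values follow the description in the text.
BASELINES = {
    "KNN(10)":  {"detector": "knn", "k": 10, "budgeting": False},
    "KNN(25)":  {"detector": "knn", "k": 25, "budgeting": False},
    "KNN+(10)": {"detector": "knn", "k": 10, "budgeting": True},
    "KNN+(25)": {"detector": "knn", "k": 25, "budgeting": True},
    "SVM":      {"detector": "svm", "classifier_update": False},
    "SVM+":     {"detector": "svm", "classifier_update": True},
}


def variants_with(key, value):
    """Names of the baselines whose configuration has key == value."""
    return [name for name, cfg in BASELINES.items() if cfg.get(key) == value]
```

For example, `variants_with("budgeting", True)` selects the two KNN+ trackers, which differ from their KNN counterparts only in the budgeting mechanism.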

BibTeX reference

    @inproceedings{meshgi2017ust,
      author={K. Meshgi and M. S. Mirzaei and S. Oba and S. Ishii},
      booktitle={2017 IEEE International Conference on Signal and Image Processing Applications (ICSIPA)},
      title={Efficient asymmetric co-tracking using uncertainty sampling},
      year={2017},
      volume={},
      number={},
      pages={241-246},
      doi={10.1109/ICSIPA.2017.8120614},
      ISSN={},
      month={Sept},
    }

Acknowledgements

This article is based on results obtained from a project commissioned by the New Energy and Industrial Technology Development Organization (NEDO).

For more information or help please email meshgi-k [at] sys.i.kyoto-u.ac.jp.